There is an excellent discussion of Transparency in the Social Sciences at the Center for Effective Global Action. Anyone working with data in the social sciences should read and learn. I want to comment on one small bit that caught my attention in Gabriel Lenz’s post:

one of the many ways researchers can torture their data is with control variables.

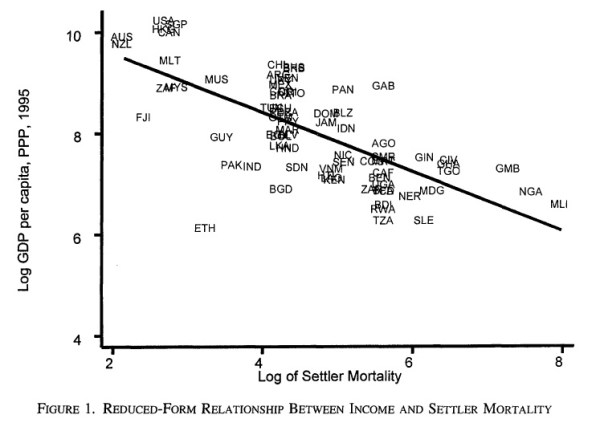

This is an important point. There is a clear preference emerging among applied researchers for findings that show up in unconditional correlations. We all want our findings to look something like this (from Acemoglu, Johnson, and Robinson):

Here is a nice bivariate correlation between settler mortality and income. The authors can add various control variables (malaria, etc.) to try to rule out confounders, but the results don’t change. The virtue is that these findings are simple, and the analysis is too: nothing is hiding behind an opaque statistical model. (Interestingly, they argue in Appendix A that including an exhaustive list of controls may be a bad idea; this is a separate issue.)

Such an approach has a lot to recommend it. I’ve used it myself, for example in figures 1 and 2 in this paper (PDF). But there is no general reason to prefer findings that show up in bivariate correlations when using observational data. I will try to illustrate this through one of the classic questions in comparative political economy: the relationship between political institutions and economic growth.

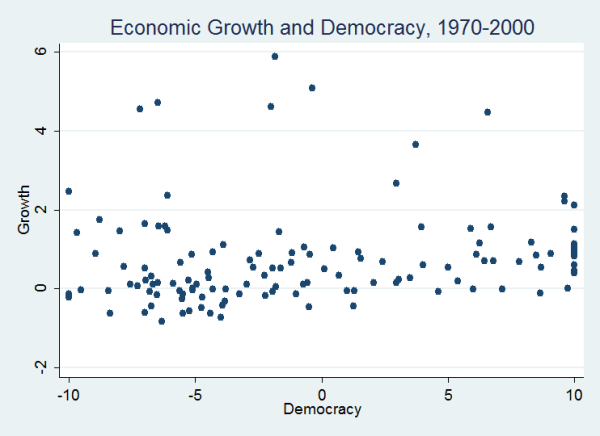

Here is a simple plot of the bivariate relationship between average level of democracy (1970-2000) and growth in real GDP per capita (1970-2000).

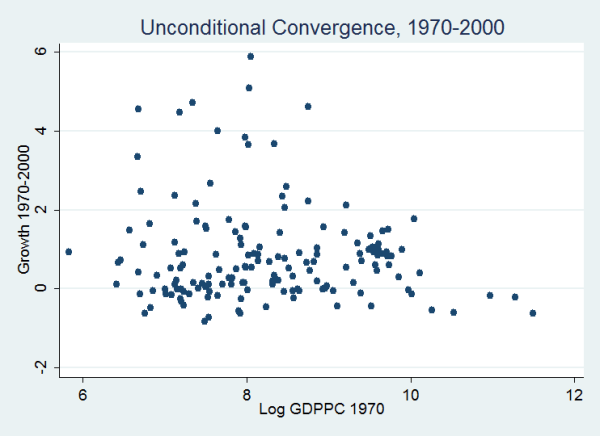

Not much to write home about. But even ignoring massive identification problems, we shouldn’t expect such a relationship in the unconditional correlations, because the Solow growth model predicts convergence: the growth rates of poorer countries will tend to be higher than those of wealthier countries for reasons having nothing to do with political institutions. And in fact, this negative relationship between initial income and subsequent growth does appear in the data.

This means that if we want to look for a relationship between political institutions and economic growth, it makes good theoretical sense to control for initial wealth levels in a multiple regression framework. Not because we are fishing, or to rule out potential confounders, but because there are simple theoretical reasons not to expect that we will observe an unconditional bivariate relationship between political institutions and economic performance. Compare, on this note, the following two regressions (t-statistics in parentheses).

|  | Model 1 | Model 2 |
|---|---|---|
| Polity 1970-2000 | 0.03 | 0.04 |
|  | (1.72) | (2.17) |
| Log GDPPC 1970 |  | -0.15 |
|  |  | (-1.46) |
| Constant | 0.74 | 1.95 |
|  | (7.30) | (2.33) |
| N | 134 | 134 |
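To make the convergence argument concrete, here is a small simulation sketch in Python with NumPy. Everything in it is hypothetical: the data-generating process (a true Polity effect of 0.04, a convergence effect of -0.5 on log initial income, and richer countries starting out more democratic) and the helper names `ols` and `simulate` are assumptions for illustration, not the data or code behind the regressions above. Only N = 134 is borrowed from the table. Under these assumptions the bivariate slope on Polity is pulled toward zero, because initial income raises democracy but lowers subsequent growth; adding initial income as a control recovers the true effect.

```python
import numpy as np


def ols(y, *regressors):
    """OLS with an intercept; returns (coefficients, classical standard errors)."""
    X = np.column_stack([np.ones(len(y)), *regressors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    n, k = X.shape
    sigma2 = resid @ resid / (n - k)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))
    return beta, se


def simulate(n=134, seed=0):
    """Hypothetical country data: democracy helps growth, but initial income
    (which is correlated with democracy) lowers growth through convergence."""
    rng = np.random.default_rng(seed)
    log_gdp0 = rng.normal(8.0, 1.0, n)                          # log GDP per capita in the start year
    polity = 2.0 * (log_gdp0 - 8.0) + rng.normal(0.0, 5.0, n)  # assumption: richer -> more democratic
    # Assumed true model: Polity effect = 0.04, convergence effect = -0.5
    growth = 5.0 + 0.04 * polity - 0.5 * log_gdp0 + rng.normal(0.0, 1.0, n)
    return log_gdp0, polity, growth


log_gdp0, polity, growth = simulate()
b_biv, se_biv = ols(growth, polity)             # Polity only
b_ctl, se_ctl = ols(growth, polity, log_gdp0)   # Polity plus initial income
print(f"bivariate:    polity = {b_biv[1]:.3f} (t = {b_biv[1] / se_biv[1]:.2f})")
print(f"with control: polity = {b_ctl[1]:.3f} (t = {b_ctl[1] / se_ctl[1]:.2f}), "
      f"log GDP = {b_ctl[2]:.3f}")
```

With only 134 simulated countries the estimates will move around from seed to seed. Raising `n` shows the bivariate slope settling near 0.04 - 0.5 * 2 / 29 ≈ 0.006 (the omitted-variable bias formula under these assumptions) and the controlled slope settling near 0.04.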

Of course, the practice of adding atheoretical control variables to multiple regression analyses in order to produce nice p-values is all too common, and it is the worst kind of fishing. But this does not imply that the only “good” or “true” findings from observational data are the ones that show up in unconditional correlations.

Unfortunately, even sophisticated model selection and model averaging techniques cannot really tell us if control variables “truly belong” in the empirical model. It all depends on the theory and the data at hand.

UPDATE: Here is a useful comment from my friend and Cornell Government colleague Adam Seth Levine.

I think a lot of this comes down to robustness. In situations where we think that an unconditional correlation makes theoretical sense (but we also would like to improve the efficiency of our estimates etc.), it is useful to present that simple correlation along with a multivariate analysis. That’s the approach I’ve taken in all of my recent empirical work. In situations where we think that an unconditional correlation doesn’t make sense (like what you describe in your post) then you present it with the one or two moderators that are theoretically critical.

Comments

3 responses to “Control Variables and Complex Analysis”

Whether adding atheoretical controls to regressions is fishing depends on what you mean by fishing and what “atheoretical” means. In general, I think it’s a good idea to control for any (non-outcome) variable that just increases noise in the outcome variable. This useful exchange I had with Gabriel Rossman (with actual code and actual (fake) data!) helps to illustrate why:

Leaving noise in the LHS variable will inflate your standard errors, and hence any single finite-sample point estimate of the parameter you’re estimating is likely to land further from the true value.

Of course you still need theoretical reasons to think that a given covariate affects y.
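Jason's point about noise controls can be checked with a quick Monte Carlo sketch. This is not the code from the exchange with Gabriel Rossman, which is not reproduced here; the data-generating process, the coefficients, and the helper names `slope_on_x` and `replicate` are hypothetical. The control `z` affects y but is drawn independently of x, so both regressions are centered on the true effect of 0.5; what changes is how widely the estimate on x scatters from sample to sample.

```python
import numpy as np


def slope_on_x(y, *regressors):
    """OLS with an intercept; returns the coefficient on the first regressor."""
    X = np.column_stack([np.ones(len(y)), *regressors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta[1]


def replicate(reps=2000, n=100, seed=0):
    """Monte Carlo: estimate the x coefficient with and without a pure-noise control z."""
    rng = np.random.default_rng(seed)
    without_z, with_z = [], []
    for _ in range(reps):
        x = rng.normal(size=n)
        z = rng.normal(size=n)                             # independent of x: it only adds noise to y
        y = 1.0 + 0.5 * x + 2.0 * z + rng.normal(size=n)   # assumed true effect of x = 0.5
        without_z.append(slope_on_x(y, x))
        with_z.append(slope_on_x(y, x, z))
    return np.array(without_z), np.array(with_z)


a, b = replicate()
print(f"x only:  mean = {a.mean():.3f}, sd across samples = {a.std():.3f}")
print(f"x and z: mean = {b.mean():.3f}, sd across samples = {b.std():.3f}")
```

Under these assumptions the residual variance falls from about 5 to about 1 once z is included, so the sampling standard deviation of the x coefficient should drop by roughly a factor of sqrt(5) ≈ 2.2, while both means stay near 0.5.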

Hi Jason–

Thanks for reading and commenting, and for sharing that exchange. It’s useful, and a perspective that I hadn’t thought much about before.

But have you read Appendix A in the Acemoglu et al. paper to which I linked above? If we are working in a world in which we aim to estimate causal effects using a regression-like procedure, then I bet that the procedure that you describe relies on the ability to argue that every one of these controls is strictly exogenous to the causal relationship between X and Y. (You’ve written “stupidcontrol1 is uncorrelated with x in expectation.”) Does that sound right?

Tom

Yes, you’re right that pure noise controls need to be uncorrelated with X (hence my referring to them as noise). Of course there’s another category of controls that do correlate with both X and Y. I think the AJR Appendix A derivation is just formally demonstrating that one should not control for outcomes (since z is driven by Y). That makes sense, as those aren’t really omitted variables, but it’s useful to have an actual proof.

To phrase this another way, we can’t have atheoretical controls for two reasons: 1) fishing for significance stars by playing with specifications; and 2) we need a reason to think they aren’t per se outcomes.
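The point about not controlling for outcomes can be illustrated with a toy simulation. It is a hypothetical setup, not the derivation in AJR's Appendix A: x causes y with an assumed true effect of 0.5, and a covariate z is itself driven by y, as described in the comment above. The helper name `ols` is also just for illustration. Because z carries information about y, conditioning on it absorbs part of the variation that x produces, and the coefficient on x shrinks.

```python
import numpy as np


def ols(y, *regressors):
    """OLS with an intercept; returns the coefficient vector [const, b1, b2, ...]."""
    X = np.column_stack([np.ones(len(y)), *regressors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta


rng = np.random.default_rng(0)
n = 100_000
x = rng.normal(size=n)
y = 1.0 + 0.5 * x + rng.normal(size=n)   # assumed true effect of x on y = 0.5
z = y + rng.normal(size=n)               # z is itself an outcome: it is driven by y

print(f"y on x:       {ols(y, x)[1]:.3f}")     # close to 0.5
print(f"y on x and z: {ols(y, x, z)[1]:.3f}")  # close to 0.25 in this setup
```

With unit-variance x, y-noise, and z-noise, working through the population normal equations gives a coefficient on x of 0.25 once z is included, half the true effect, even though nothing about the causal relationship between x and y has changed.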